NVIDIA unveiled its Tegra K1 processor at CES 2014. The 32-bit version began making its way into devices in the first half of the year, but the 64-bit version has yet to appear in any. In a blog entry today, NVIDIA indicated that we may not have to wait much longer:

Look forward later this year to some amazing mobile devices based on the 64-bit Tegra K1 from our partners. And for hard-core Android fans, take note that we’re already developing the next version of Android – “L” – on the 64-bit Tegra K1.

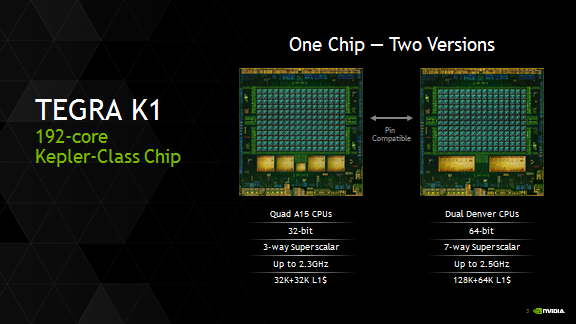

The NVIDIA Tegra K1 Denver processor shares much in common with the 32-bit version, but there are a number of differences as well. Whereas the 32-bit version comes with four Cortex-A15 cores, Denver comes with two 64-bit ARMv8-compatible cores that can run at a slightly faster clock speed of up to 2.5GHz. Despite there being only two cores, NVIDIA promises they will deliver “significantly higher performance than existing four- to eight-core mobile CPUs on most mobile workloads.”

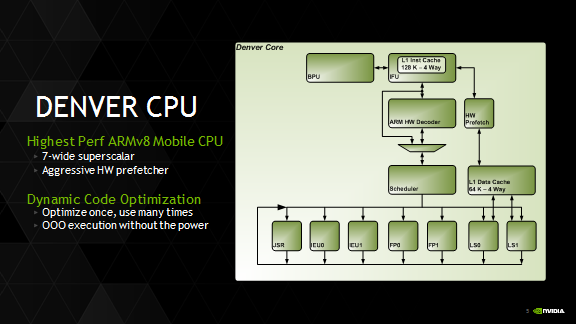

Each core implements a 7-way superscalar microarchitecture allowing up to 7 concurrent micro-ops to be executed per clock cycle and includes a 128KB 4-way L1 instruction cache and a 64KB 4-way L1 data cache. A 2MB 16-way L2 cache also services both cores.
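To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It computes the theoretical peak micro-op rate implied by the 7-wide design at the quoted 2.5GHz, and the number of sets in each cache. The function names are purely illustrative, and the 64-byte cache line size is an assumption that NVIDIA's quoted specs do not confirm. The peak rate is an upper bound, not a figure any real workload would sustain.

```python
KB = 1024
MB = 1024 * KB

# Assumption: 64-byte cache lines. The article does not state the line size.
ASSUMED_LINE_BYTES = 64


def peak_micro_ops_per_second(width: int, clock_hz: int, cores: int) -> int:
    """Theoretical ceiling: every issue slot filled on every cycle on every core."""
    return width * clock_hz * cores


def cache_sets(size_bytes: int, ways: int, line_bytes: int) -> int:
    """Number of sets in a set-associative cache: size / (ways * line size)."""
    sets, remainder = divmod(size_bytes, ways * line_bytes)
    if remainder:
        raise ValueError("cache size is not a multiple of ways * line size")
    return sets


# Figures quoted in the article: 7-wide, up to 2.5GHz, two cores.
peak = peak_micro_ops_per_second(width=7, clock_hz=2_500_000_000, cores=2)

l1i_sets = cache_sets(128 * KB, ways=4, line_bytes=ASSUMED_LINE_BYTES)
l1d_sets = cache_sets(64 * KB, ways=4, line_bytes=ASSUMED_LINE_BYTES)
l2_sets = cache_sets(2 * MB, ways=16, line_bytes=ASSUMED_LINE_BYTES)

print(f"Peak micro-ops/s (both cores): {peak:,}")
print(f"L1I sets: {l1i_sets}, L1D sets: {l1d_sets}, L2 sets: {l2_sets}")
```

Under the assumed line size, that works out to 512 sets for the L1 instruction cache, 256 for the L1 data cache and 2,048 for the shared L2, with a combined ceiling of 35 billion micro-ops per second across both cores.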

Denver also supports a process called Dynamic Code Optimization that “optimizes frequently used software routines at runtime into dense, highly tuned microcode-equivalent routines.” Because the optimized routines are stored in a dedicated 128MB optimization cache, they do not need to be re-optimized each and every time they are needed. This promises t

What does this all mean for the average user? NVIDIA promises that mobile devices using the 64-bit Tegra K1 chipset will “offer PC-class performance for standard apps, extended battery life and the best web browsing experience.”

The oft-rumoured Google Nexus 9 built by HTC could be among the first to be powered by the 64-bit NVIDIA Tegra K1 chipset.

Source: NVIDIA